ABOUT YOU TECH

Vol. 9: Oliver, Software Developer Backbone

Inside ABOUT YOU: How automated testing and continuous integration help us to ensure system stability

Get to know our growing family. Follow our Inside ABOUT YOU blog series and catch a brief glimpse of the people behind Germany’s most exciting e-commerce project!

Introduce yourself and tell us a little about your team and especially your role at ABOUT YOU.

Hi, I’m Oliver. I’m 26 years old and have worked for ABOUT YOU since June 2018. Because my background is mainly in backend development, I joined the “BABO Core” circle of the BABO unit. In other projects, I gained a lot of experience with test-driven development (TDD) and the Laravel framework, so I quickly got to grips with the testing topic that this interview is about. The tasks of our circle are not only to fix bugs and develop features, but also to monitor and profile the current project and look after its stability, as many other systems depend on it. There are no fixed roles among the developers in our team, but everyone has a lot of experience with their topics. For example, the developers who have been at ABOUT YOU longer help the new developers find their way into the system. I have the feeling that everyone can grow into the topics that interest them, and I think that’s one of the reasons why I got into testing.

Which challenges or problems did you face when you joined the team?

When I started working at ABOUT YOU, I faced a huge codebase that was still growing quickly. Since many other teams rely on the system we develop, we get a lot of change and feature requests. Our main tasks are to make the system flexible enough to fit all our clients and to add new features. Because the code changed so rapidly, some tests had been left unmaintained, which led to a failing test suite. In large projects like ours, a high frequency of changes makes it easy to introduce bugs that cause problems in edge cases or even in the normal process. This is particularly bad for a core system, because incorrect behavior affects other teams, who then start investigating the problem on their side. That costs time, money and motivation across the whole company.

So how did you and your other colleagues solve the problem and what improved since you joined ABOUT YOU?

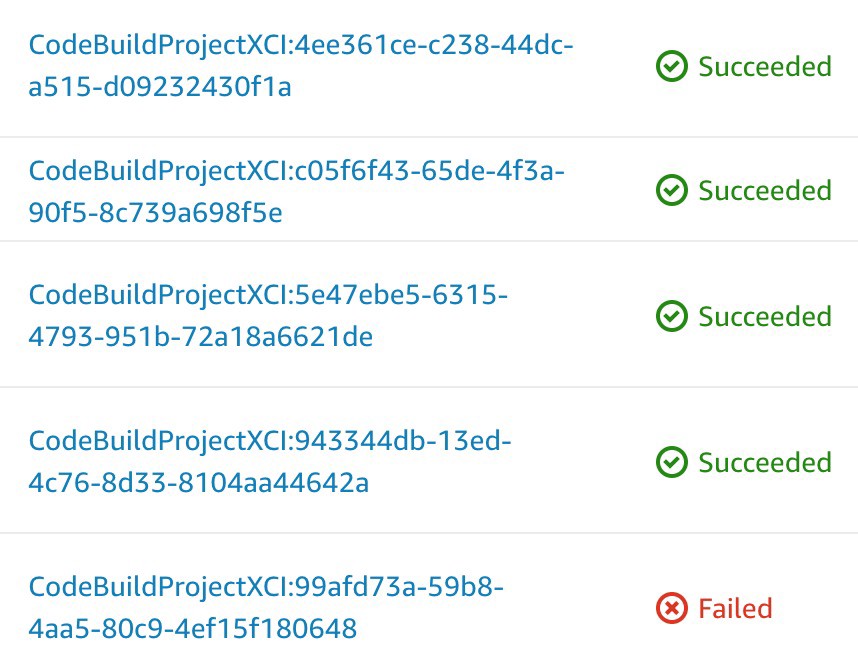

In the past, I mainly worked on fully test-driven projects. One of the advantages of a passing test suite with good code coverage is that bugs caused by new features but affecting old functionality are often found automatically. When I started working at ABOUT YOU, there were plans to use AWS CodeBuild for continuous integration and to run the tests before each deployment. So I worked together with a colleague who had already set up CodeBuild in another ABOUT YOU project and had built a Docker container that we could use for our tests. While he took care of the AWS part, I fixed the tests on the code side. Once the tests passed again, we took the integration live. The result was a new Rocket.Chat channel where a bot reports failing tests and notifies the responsible developer via email. The build is triggered by each pull request to the master branch and by any push to our staging system. During the development of a new feature, any developer can manually trigger CodeBuild for their development branch at any time to make sure the test suite will pass when the pull request is created.

Which issues did you face in the process?

It was quite difficult to get the tests working at first, because I had only just started working with our BABO system and didn’t know all of its functions and features yet. Debugging old tests was therefore often more about asking other developers how certain functions worked and adjusting assertions to the new requirements.

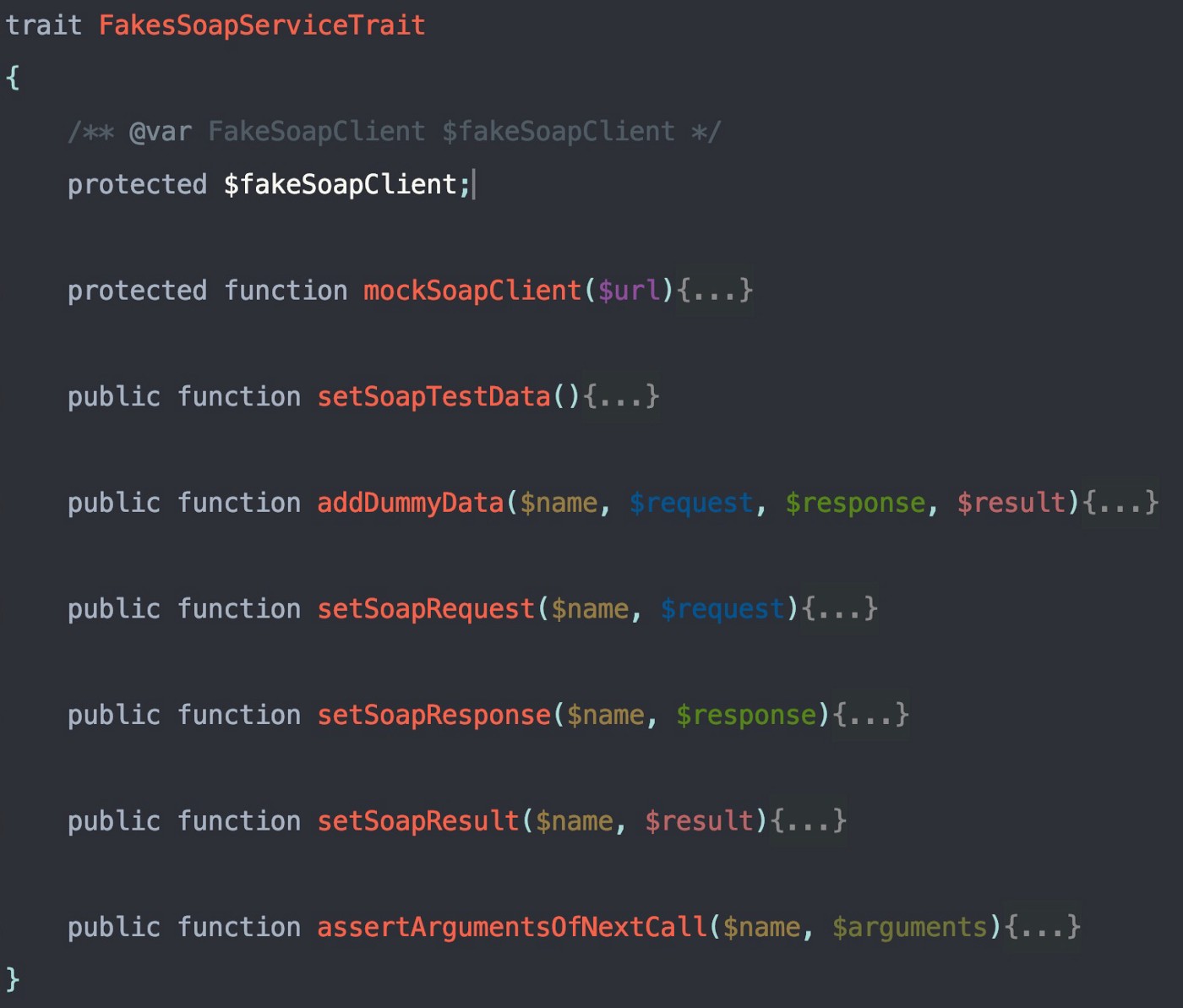

Another problem was that we used functions like curl to fetch data from external servers. To get the tests working without accessing those servers, I had to move these calls into their own service classes and then mock them, both to assert that the expected request was sent and to provide the data the server would normally return.
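The idea of wrapping external requests in a service class and mocking that class in tests can be sketched as follows. This is only an illustration in Python, not the team's PHP code: the names `HttpClient`, `PriceImporter`, `run_mock_example` and the `example.invalid` URL are hypothetical and do not come from the BABO system.

```python
from unittest.mock import Mock


class HttpClient:
    """Thin service class around outbound HTTP calls (hypothetical name)."""

    def get_json(self, url):
        # A real implementation would perform the network request here.
        raise NotImplementedError("no network access in this sketch")


class PriceImporter:
    """Example consumer that receives its HTTP client via the constructor."""

    def __init__(self, client):
        self.client = client

    def import_price(self, product_id):
        url = f"https://example.invalid/prices/{product_id}"
        data = self.client.get_json(url)
        return float(data["price"])


def run_mock_example():
    # Swap the real client for a mock that returns canned data,
    # so the test never touches an external server.
    client = Mock(spec=HttpClient)
    client.get_json.return_value = {"price": "19.99"}

    price = PriceImporter(client).import_price(42)

    # Assert that exactly the expected request was sent.
    client.get_json.assert_called_once_with("https://example.invalid/prices/42")
    return price
```

Because the client is passed in through the constructor, production code can use the real service class while the test injects the mock, which is the same separation the answer above describes.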

Development time was also a problem. Fixing the tests was quite time-consuming, so I often had to rebuild the branch I was working on. That was sometimes painful, because the other developers carried on working as before and kept writing code without the new service classes, which had not been merged into master yet.

After we introduced continuous integration (CI), the team was of course not used to it yet, so I often had to fix tests myself to turn them green again. It took some time to get everybody into a workflow where they made sure the tests passed before creating a pull request or merging code into the staging environment.

What is the current state of the test suite and the CI?

The CI still works as described and I am very happy with the workflow. Everyone takes care of the tests, including developers from other teams who push to our repository. Everyone knows about the email notifications and the test suite, and failing tests get fixed quickly.

To improve the test suite, I have optimized the execution time of some slow tests we had, so we can run a bunch of tests over and over to ensure nothing else breaks while we write our code.

Since all of our developers now write tests, our new features have excellent code coverage and integrating them is much easier. As a result, the whole system is more stable, and we were able to fix a lot of bugs before they were deployed, because the build broke and showed us the exact location where the code failed. The process has improved our efficiency, and other teams have recently started to follow it.

Can you provide some technical details?

Our current test structure contains unit tests and functional tests, where the functional tests are divided into API tests and command tests. We currently provide three different APIs for other teams, so we have to make sure that all of them work as expected after a new feature is deployed. We also look after a huge database and have a large scheduling and data processing system, which is based on Laravel’s Artisan commands. Manual testing is often very difficult and sometimes impossible, but in a test case we can build huge database structures and assert the results after triggering the Artisan command. In particular, this includes commands that work with images or download data from external systems.

Interactions with queues like Amazon SQS and caching services like Redis are also very difficult to verify by hand, so we built a test framework containing custom fake classes for frequently used services like SNS, SQS and S3 from Amazon Web Services, and for built-in PHP functionality like the SoapClient class and the curl functions.

We have also developed a test listener, which can be enabled to get extended logging or performance data for the tests. This helps to eliminate slow tests and to track down complex errors. Another part is a set of useful traits, for example to quickly load and parse JSON files, import sample data into specific parts of the database, or rapidly swap in the fake services at the right locations.
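A custom fake class for a queue service, as mentioned above, could look roughly like this. The sketch is in Python for brevity and is not the team's actual PHP framework: `FakeQueue`, its `send` and `assert_sent` methods, and the toy `resize_images_command` are all hypothetical names chosen for illustration.

```python
import json


class FakeQueue:
    """In-memory stand-in for a message queue client (hypothetical interface)."""

    def __init__(self):
        self.messages = []

    def send(self, body):
        # Store a JSON round-tripped copy, since a real queue would
        # serialize the message body before transport.
        self.messages.append(json.loads(json.dumps(body)))

    def assert_sent(self, expected):
        # Custom assertion helper: fails if the message was never queued.
        assert expected in self.messages, f"{expected!r} was never queued"


def resize_images_command(image_ids, queue):
    """Toy command: queues one resize job per image and returns the job count."""
    for image_id in image_ids:
        queue.send({"job": "resize", "image_id": image_id})
    return len(image_ids)


def run_fake_queue_example():
    # The fake is swapped in where production code would use the real queue.
    queue = FakeQueue()
    count = resize_images_command([1, 2, 3], queue)

    # Check what would have been sent, without any external service.
    queue.assert_sent({"job": "resize", "image_id": 2})
    return count
```

Unlike a generic mock, a hand-written fake keeps real (if simplified) behavior, so the same class can be reused across many tests and extended with assertion helpers that match the service it replaces.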

Thank you, Oliver! :)